Turning Audio into Authority: A Workflow for Podcasters in the AI Search Era

📍 Semantic Summary

- Idea: Podcasters sit on a goldmine of expert content, but raw audio remains largely invisible to Large Language Models (LLMs) and search engines. To maximize reach, audio must be transformed into highly structured, semantically dense text.

- Challenge: Simply pasting an unedited AI transcript onto a webpage is no longer sufficient. Search engines and AI Overviews require semantic clarity, logical hierarchy, and comprehensive entity coverage to retrieve and cite content.

- Summary: By understanding how LLMs parse text and integrating NEURONwriter into your production workflow, you can identify missing semantic entities and restructure spoken words into authoritative, highly cited blog posts and show notes.

Read the full guide below, or explore related topics: Podcast SEO in 2026 · The “15k Token Limit” · Agentic Engine Optimization (AEO)

For podcasters, the microphone is the ultimate tool for capturing expertise. A 45-minute interview can generate over 6,000 words of deep, nuanced insight. The audience for this content is massive and growing: according to the 2025 U.S. Podcast Report by Triton Digital, over 53% of the U.S. population now consumes podcasts on a monthly basis.

Similarly, Edison Research’s “The Podcast Consumer 2025” study notes that total time spent with podcasts has grown by 355% over the past decade.

However, if that insight remains trapped inside an MP3 file, its discoverability is severely limited.

While platforms are improving their internal search capabilities, Google and AI-driven Answer Engines still rely heavily on text to understand, index, and cite content. To truly dominate your niche, you must bridge the “semantic gap” between spoken word and machine-readable text.

This guide explores the theory behind how AI search engines retrieve information and outlines a practical podcast SEO workflow using NEURONwriter.

The Semantic Gap: Why Raw Transcripts Are Not Enough

Raw transcripts are unstructured and conversational. They lack the semantic clarity, hierarchical formatting, and specific entities required by LLMs to understand and retrieve the information reliably.

It has become standard practice to use AI tools to generate transcripts. While this is a crucial first step that significantly increases your indexable content, a raw transcript is not a finished SEO product.

Human conversation is messy. We use filler words, go off on tangents, and rarely use the exact phrasing that users type into search engines. More importantly, we do not speak in HTML tags.

To understand why this is a problem, we must look at how Large Language Models (LLMs) interpret content. According to analysis by Search Engine Journal, LLMs do not scan a page the way traditional bots do; they ingest it, break it into tokens, and analyze the relationships between words and concepts using attention mechanisms.

“They’re not looking for a <meta> tag or a JSON-LD snippet to tell them what a page is about. They’re looking for semantic clarity: Does this content express a clear idea? Is it coherent? Does it answer a question directly?” — Search Engine Journal

LLMs favor content that is logically segmented, consistent in terminology, and presented in a format that lends itself to quick parsing, such as FAQs or defined topic scopes.

If you simply paste a raw transcript onto your website, you miss the opportunity to structure the data for Agentic Engine Optimization (AEO).

The Podcast Optimization Workflow

The goal of this workflow is not to change what you said on the podcast, but to package those insights into a format that aligns with how AI search engines process information.

Generate and Clean the Raw Transcript

Begin by running your audio through an AI transcription service. Once you have the text, perform a basic cleanup: remove excessive filler words, correct glaring transcription errors, and ensure speaker labels are accurate.
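The basic cleanup pass can be partly automated. The following is a minimal sketch, assuming a transcript where each line reads like `Speaker: text`; the `FILLERS` list and the `clean_line` helper are hypothetical names chosen for illustration, not part of any transcription service. It only strips common filler words, so a human read-through for transcription errors and speaker labels is still needed.

```python
import re

# Hypothetical filler list (an assumption); tune it to your own speakers.
FILLERS = ["um", "uh", "er", "you know", "i mean"]

# Match a filler as a whole word or phrase, plus an optional trailing comma and spaces.
_FILLER_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b,?\s*",
    flags=re.IGNORECASE,
)


def clean_line(line: str) -> str:
    """Strip filler words from one transcript line and tidy leftover spacing."""
    cleaned = _FILLER_RE.sub("", line)
    cleaned = re.sub(r"\s+([,.!?])", r"\1", cleaned)  # drop spaces before punctuation
    cleaned = re.sub(r"\s{2,}", " ", cleaned)         # collapse repeated spaces
    return cleaned.strip()


if __name__ == "__main__":
    print(clean_line("Host: So, um, pricing is uh the hard part."))
```

A phrase like "I mean" is sometimes meaningful rather than filler, so treat the output as a first draft rather than a finished edit.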

Establish the Semantic Baseline

Instead of guessing what terminology your episode should include, let data guide the structure.

1. Identify the core topic of your episode (e.g., “B2B SaaS marketing strategies”).

2. Enter this query into NEURONwriter to create a new analysis.

3. NEURONwriter will analyze the top-ranking pages for that topic and extract the exact NLP (Natural Language Processing) terms and entities that define authority in that space.

Score Your Spoken Content

Paste your cleaned transcript into the NEURONwriter Content Editor.

You will likely notice that despite your expertise, the initial Content Score might be lower than expected. This happens because spoken conversation naturally misses specific industry jargon or related subtopics that competitors cover in structured written guides. NEURONwriter will highlight exactly which Basic Terms and Extended Terms are missing from your transcript.
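To make the idea of "missing terms" concrete, here is a rough sketch of a literal coverage check. It is not how NEURONwriter computes its Content Score; it assumes you have copied a list of recommended terms by hand, and the `term_coverage` function and the sample terms are hypothetical.

```python
import re


def term_coverage(transcript: str, terms: list[str]) -> tuple[list[str], list[str]]:
    """Split terms into (found, missing) using case-insensitive whole-phrase matching."""
    text = transcript.lower()
    found, missing = [], []
    for term in terms:
        # \b keeps "churn" from matching inside a longer word.
        pattern = r"\b" + re.escape(term.lower()) + r"\b"
        if re.search(pattern, text):
            found.append(term)
        else:
            missing.append(term)
    return found, missing


if __name__ == "__main__":
    transcript = (
        "We cut churn by fixing onboarding, and our customer "
        "acquisition cost dropped."
    )
    # Illustrative terms only; in practice these come from your own analysis.
    terms = ["churn", "customer acquisition cost", "product-led growth", "free trial"]
    found, missing = term_coverage(transcript, terms)
    print("found:", found)
    print("missing:", missing)
```

An exact-phrase check like this ignores plurals and synonyms, so it tends to overstate what is missing; it is useful mainly as a quick sanity check before you write the supporting sections.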

Engineer the “Information Gain”

You cannot go back and re-record the episode to include missing keywords. Instead, use NEURONwriter to build structured additions around your transcript that provide the semantic clarity LLMs require.

- Write an Optimized Introduction: Craft a 300-word introduction that summarizes the episode while naturally incorporating the high-priority NLP terms suggested by NEURONwriter. This defines the topic scope at the top of the page, which LLMs favor.

- Build an FAQ Section: Use NEURONwriter’s Draft & Outline builder to identify the questions top competitors are answering. Create a dedicated FAQ section summarizing the podcast’s answers using the recommended semantic terms.

- Create Structured Show Notes: Transform conversational tangents into clear, bulleted takeaways. Ensure these takeaways utilize the specific entities NEURONwriter identifies as crucial.

Format for Machine Readability

AI agents and search crawlers rely on structure to understand hierarchy. Ensure your page uses a clear, consistent heading hierarchy:

- Wrap your episode title in an <h1> tag.

- Use <h2> tags for major topic shifts within the episode.

- Use <h3> tags for specific questions or sub-points.

As noted in industry research, clean structure is now essentially a ranking factor in the AI citation economy.
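The heading rules above can be checked automatically before you publish. The sketch below uses Python's standard `html.parser` module; the `HeadingCollector` class and `heading_problems` function are hypothetical names, and the "no skipped levels" rule is an added assumption based on common practice rather than something this guide prescribes.

```python
from html.parser import HTMLParser

HEADING_TAGS = ("h1", "h2", "h3", "h4", "h5", "h6")


class HeadingCollector(HTMLParser):
    """Record the level of every heading tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Return human-readable problems with the page's heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    problems = []
    h1_count = collector.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    # Assumption: a heading should not jump more than one level deeper.
    for prev, cur in zip(collector.levels, collector.levels[1:]):
        if cur > prev + 1:
            problems.append(f"<h{cur}> follows <h{prev}> (skipped a level)")
    return problems


if __name__ == "__main__":
    page = "<h1>Episode 12</h1><h2>AI shopping agents</h2><h3>Are they safe?</h3>"
    print(heading_problems(page))
```

Running this against the rendered HTML of an episode page gives a quick list of structural issues to fix by hand.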

Turning One Episode into a Content Cluster

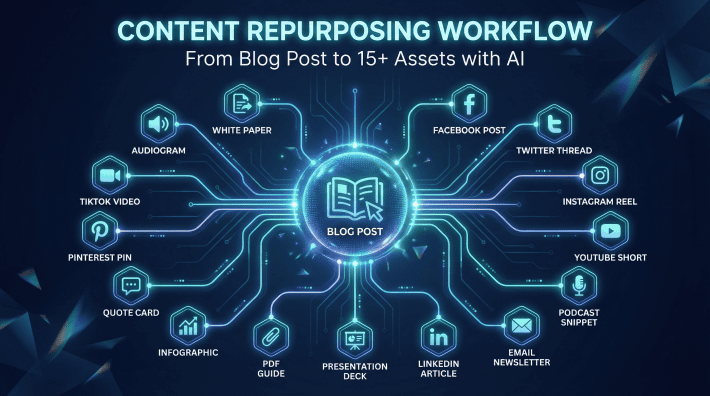

A single podcast transcript can serve as the foundational “Hub” for a broader topic cluster, allowing you to spin off multiple, highly targeted articles that build topical authority.

If your podcast episode was a deep dive into a broad topic, you can execute a content repurposing workflow.

For example, if your 60-minute interview covered “The Future of E-commerce,” the transcript might touch on AI shopping agents, supply chain logistics, and mobile checkout optimization.

Instead of just publishing the transcript, you can:

1. Open a new NEURONwriter project for “AI shopping agents.”

2. Extract the relevant 10-minute segment from your transcript.

3. Paste it into the editor and use NEURONwriter recommendations to expand that specific segment into a standalone, 1,500-word article.

4. Link this new specific article back to the main podcast episode page.

This strategy allows you to build deep topical authority efficiently, using your podcast as the primary research engine for your entire SEO strategy.
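Extracting a segment is straightforward if the transcript carries timestamps. This is a minimal sketch, assuming each line starts with a `[HH:MM:SS]` stamp; that format, the `extract_segment` function, and the sample lines are all hypothetical, so adjust the pattern to whatever your transcription tool exports.

```python
import re

# Assumed line format: "[HH:MM:SS] Speaker: text"
_STAMP = re.compile(r"^\[(\d{2}):(\d{2}):(\d{2})\]\s*(.*)$")


def to_seconds(stamp: str) -> int:
    """Convert an "HH:MM:SS" string to a number of seconds."""
    hours, minutes, seconds = (int(part) for part in stamp.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def extract_segment(lines: list[str], start: str, end: str) -> list[str]:
    """Return the text of lines stamped at or after start and before end."""
    lo, hi = to_seconds(start), to_seconds(end)
    kept = []
    for line in lines:
        match = _STAMP.match(line)
        if not match:
            continue  # skip lines without a timestamp
        hours, minutes, seconds, text = match.groups()
        stamp_seconds = int(hours) * 3600 + int(minutes) * 60 + int(seconds)
        if lo <= stamp_seconds < hi:
            kept.append(text)
    return kept


if __name__ == "__main__":
    transcript = [
        "[00:19:50] Host: Now to logistics.",
        "[00:20:05] Guest: AI shopping agents compare prices for you.",
        "[00:29:40] Guest: They will also handle checkout.",
        "[00:30:10] Host: Moving on to mobile.",
    ]
    for text in extract_segment(transcript, "00:20:00", "00:30:00"):
        print(text)
```

The extracted lines are raw material only; they still need the cleanup and expansion steps described earlier before they read as a standalone article.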

The Results: Authority, Citations, and Traffic

By adopting a structured workflow, you fundamentally change the ROI of your audio content.

When you optimize transcripts and show notes for semantic density, entity coverage, and hierarchical formatting, you ensure that your expertise is machine-readable. This means when a user asks an AI engine a complex question, the AI can easily parse your structured episode page, extract the factual insights, and cite your brand as the source.

You stop relying solely on podcast app algorithms for discovery and start capturing high-intent search traffic directly from Google and emerging AI interfaces.

FAQ

Q1: Can I just upload my raw AI transcript to my blog?

A: You can, but it will likely underperform. Raw transcripts lack semantic density and proper HTML structure (H2/H3 tags). To rank well, the transcript must be optimized and supplemented with structured summaries and NLP terms.

Q2: How does NEURONwriter help with podcast SEO?

A: NEURONwriter analyzes your transcript against top-ranking competitors and identifies missing semantic entities. It guides you in writing optimized introductions, show notes, and FAQs to bridge the gap between spoken conversation and search engine requirements.

Q3: Should I edit the transcript to include keywords?

A: Do not alter direct quotes to stuff keywords. Instead, use NEURONwriter to identify missing terms, and incorporate those terms naturally into the episode introduction, summary bullet points, and the FAQ section on the same page.

Q4: How long should an optimized podcast page be?

A: If it includes a full transcript, the page will naturally be long (often 3,000+ words). The key is to front-load the most important information (summaries, direct answers) at the top of the page so users and AI agents can find value immediately.

Q5: Can I turn one podcast into multiple articles?

A: Yes. A comprehensive interview can serve as the foundation for a topic cluster. You can extract specific segments of the transcript and use NEURONwriter to expand them into standalone, highly targeted blog posts.

Q6: How often should I update an optimized podcast episode page?

A: Review each episode page every 90 days. Update the introduction and FAQ section to reflect any new NLP terms that NEURONwriter identifies as trending for your topic. Pages that receive periodic refreshes tend to compound rankings over time because the URL accumulates authority while the content stays current.

Q7: What is the difference between show notes and a full transcript page?

A: Show notes are a curated, structured summary of the episode, typically 300–600 words, written with SEO intent and optimized using NEURONwriter-recommended entities. A full transcript page includes the complete spoken text alongside those optimized show notes. For maximum authority, publish both: a clean show notes section at the top of the page for users and AI agents, followed by the full timestamped transcript below for long-tail keyword coverage.